Weekend Reading #75

Weekend Reading: A weekly roundup of interesting Software Architecture and Programming articles from tech companies. Find fresh ideas and insights every weekend.

This week: a deep dive into the Model Context Protocol (MCP), Airbnb's dynamic configuration platform Sitar, Pinterest's 96% reduction in Spark OOM errors with Auto Memory Retries, and Lyft's LLM-powered localization pipeline for faster internationalization.

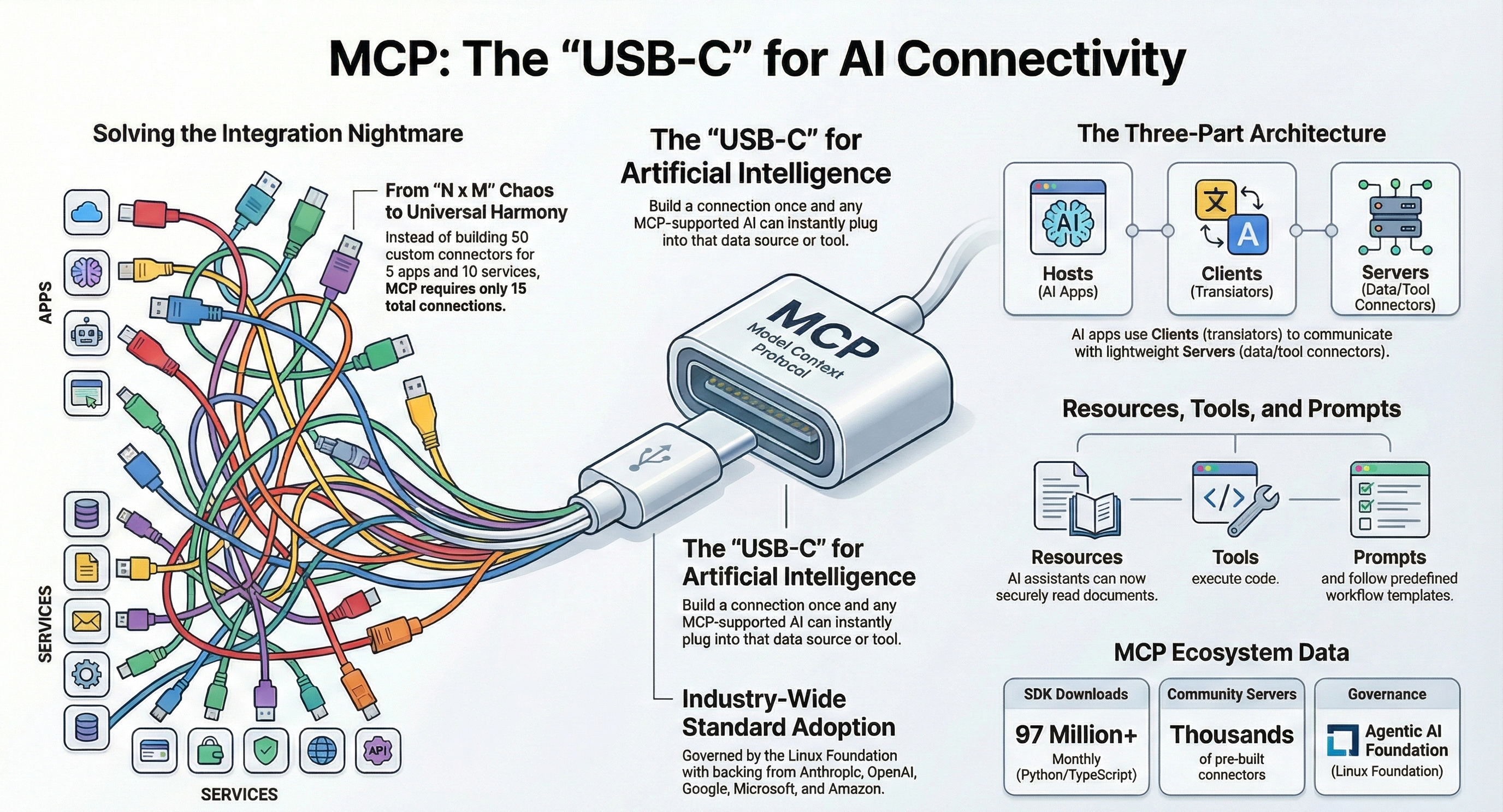

What is the Model Context Protocol (MCP)

👉 For backend developers, AI/ML engineers, and anyone building integrations between AI models and external tools or data sources

https://bool.dev/blog/detail/model-context-protocol

A comprehensive overview of MCP, the open standard (often called "USB-C for AI") that lets AI models connect to external tools and data sources through a universal protocol, eliminating the need for custom integrations. The article covers architecture and security considerations and includes a step-by-step guide to building your own MCP server.
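To make the "universal protocol" idea concrete: MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with the `tools/call` method. The snippet below is a minimal sketch of that request shape only, not code from the linked article. The helper `make_tool_call`, the tool name `get_weather`, and its arguments are hypothetical.

```python
import json


def make_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# "get_weather" and its arguments are made up for illustration.
request = make_tool_call(1, "get_weather", {"city": "Kyiv"})
print(json.dumps(request, indent=2))
```

Because every server accepts the same envelope, a client only needs the tool's name and argument schema, which it can discover with `tools/list`, rather than a custom integration per tool.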

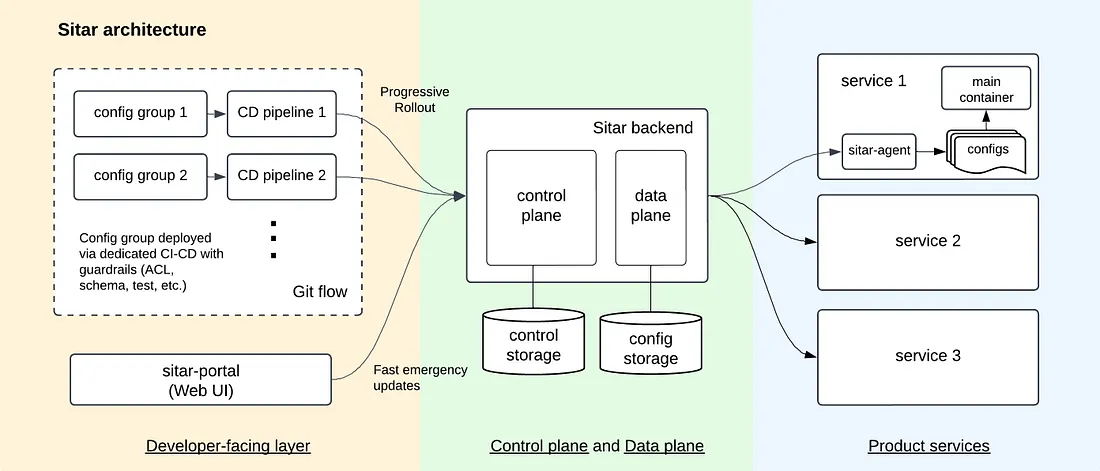

Safeguarding Dynamic Configuration Changes at Scale

👉 For platform engineers, SREs, infrastructure engineers, and backend developers working with dynamic configuration in distributed systems

https://bool.dev/l/1815

Airbnb shares the architecture of their internal dynamic config platform "Sitar," which enables safe runtime behavior changes without redeployments. The post covers their Git-based workflow, staged rollouts with fast rollbacks, separated control/data planes, and local caching for resilience.
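The "local caching for resilience" point can be illustrated with a small sketch: a client keeps serving the last successfully fetched config when the config service is unreachable. This is an assumption about the general pattern, not Sitar's actual client. `CachedConfigClient`, `flaky_fetch`, and the `search.page_size` key are hypothetical.

```python
class CachedConfigClient:
    """Serves config from a local cache; refreshes are best effort."""

    def __init__(self, fetch, defaults=None):
        self._fetch = fetch  # callable returning a dict; may raise
        self._cache = dict(defaults or {})

    def refresh(self):
        """Try to pull fresh config; keep the last known good copy on failure."""
        try:
            self._cache = dict(self._fetch())
            return True
        except Exception:
            return False

    def get(self, key, default=None):
        return self._cache.get(key, default)


# Hypothetical fetcher: succeeds once, then the config service goes down.
responses = [{"search.page_size": 50}]


def flaky_fetch():
    if responses:
        return responses.pop(0)
    raise ConnectionError("config service unavailable")


client = CachedConfigClient(flaky_fetch, defaults={"search.page_size": 20})
first_refresh = client.refresh()   # succeeds, cache now holds 50
second_refresh = client.refresh()  # fails, cache is left untouched
print(first_refresh, second_refresh, client.get("search.page_size"))
```

Reads never touch the network in this sketch, so an outage of the config service degrades freshness rather than availability.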

Drastically Reducing Out-of-Memory Errors in Apache Spark at Pinterest

👉 For data engineers, platform engineers, and anyone running large-scale Apache Spark workloads

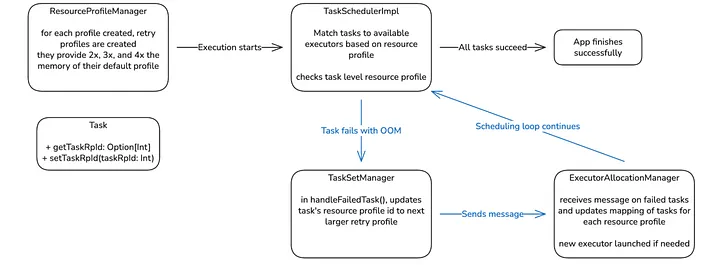

Pinterest engineered an "Auto Memory Retries" feature that automatically identifies memory-heavy Spark tasks and retries them on larger executors, reducing OOM failures by 96% across their 90k+ daily Spark jobs. The team plans to contribute this feature upstream to Apache Spark.

https://bool.dev/l/1816
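The core idea behind retrying memory-heavy work on larger executors can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Pinterest's implementation, which works inside Spark's scheduling rather than in user code. `run_with_memory_retries`, the `OutOfMemory` exception, the doubling factor, and the memory sizes are all hypothetical.

```python
class OutOfMemory(Exception):
    """Stand-in for an executor OOM failure."""


def run_with_memory_retries(task, memory_gb, max_memory_gb, growth=2):
    """Run task(memory_gb); on OOM, retry with more memory up to a cap."""
    attempts = []
    while True:
        attempts.append(memory_gb)
        try:
            return task(memory_gb), attempts
        except OutOfMemory:
            if memory_gb >= max_memory_gb:
                raise  # already at the cap, give up
            memory_gb = min(memory_gb * growth, max_memory_gb)


# Hypothetical task that needs at least 6 GB to finish.
def heavy_task(memory_gb):
    if memory_gb < 6:
        raise OutOfMemory(f"{memory_gb} GB is not enough")
    return "done"


result, attempts = run_with_memory_retries(heavy_task, memory_gb=2, max_memory_gb=16)
print(result, attempts)
```

Only the tasks that actually fail pay for the larger allocation, so the default executor size can stay small for the majority of the workload.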

Scaling Localization with AI at Lyft

👉 For localization engineers, product managers handling internationalization, and engineering teams exploring LLM-powered translation workflows

https://bool.dev/l/1817

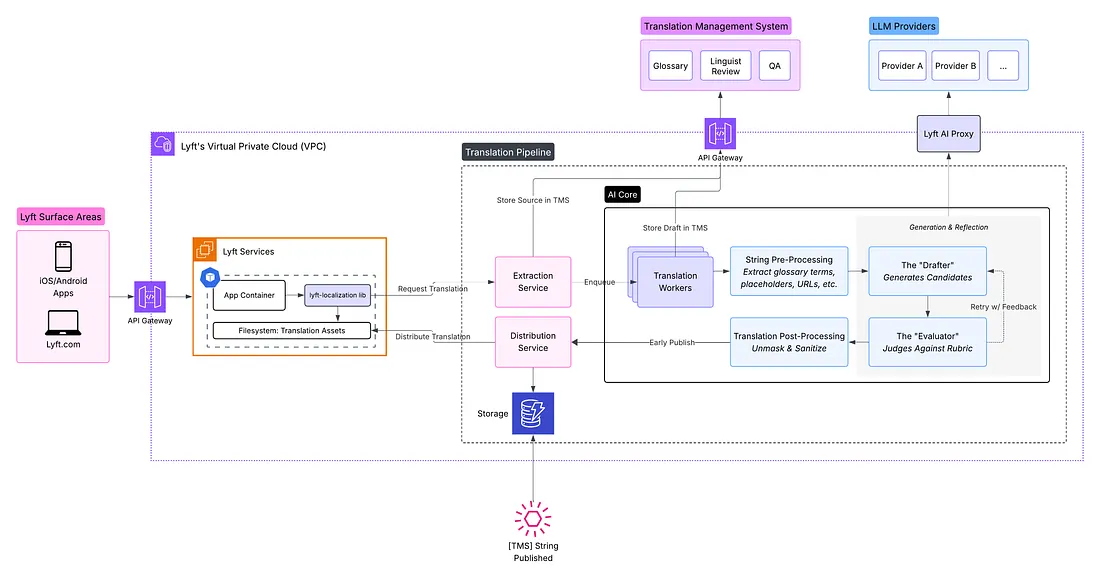

Lyft replaced its multi-day human-only translation pipeline with a dual-path architecture where LLM-generated translations ship immediately to unblock launches, while linguists review them asynchronously. This approach was critical to expanding into new markets and to complying with language legislation such as Québec's Bill 96.
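A rough sketch of the dual-path idea described above: the machine translation is published right away, and the same string is queued for a linguist to review later. This is an illustration of the pattern only, not Lyft's pipeline. `DualPathLocalizer`, `fake_llm_translate`, and the sample strings are hypothetical, and the "LLM" here is a placeholder function.

```python
from collections import deque


def fake_llm_translate(text, locale):
    """Placeholder for a real LLM call; just tags the text with the locale."""
    return f"[{locale}] {text}"


class DualPathLocalizer:
    def __init__(self, translate):
        self._translate = translate
        self.published = {}          # (key, locale) -> text served to users
        self.review_queue = deque()  # items awaiting asynchronous review

    def submit(self, key, source_text, locale):
        """Fast path: publish the machine draft now, queue it for review."""
        draft = self._translate(source_text, locale)
        self.published[(key, locale)] = draft
        self.review_queue.append((key, locale))
        return draft

    def review_next(self, correct):
        """Slow path: a linguist's correction replaces the published draft."""
        key, locale = self.review_queue.popleft()
        self.published[(key, locale)] = correct(self.published[(key, locale)])


localizer = DualPathLocalizer(fake_llm_translate)
localizer.submit("ride.confirm", "Confirm ride", "fr-CA")
before_review = localizer.published[("ride.confirm", "fr-CA")]

localizer.review_next(lambda draft: "Confirmer la course")
after_review = localizer.published[("ride.confirm", "fr-CA")]
print(before_review, "->", after_review)
```

The launch is never blocked on the review queue; review only improves strings that are already live.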