Weekend Reading #79

Weekend Reading: A weekly roundup of interesting Software Architecture and Programming articles from tech companies. Find fresh ideas and insights every weekend.

This week: Pinterest traces the evolution of their feed re-ranking from DPP to Sliding Spectrum Decomposition, showing why diversity matters for long-term retention. Airbnb shares a battle-tested migration from StatsD to OpenTelemetry, with a dual-write approach, that cut CPU time spent on metrics processing from 10% to under 1%. Uber optimized Petastorm to resolve a GPU utilization bottleneck, slashing training time from 22 hours to 3 hours. And Netflix details the architecture behind their multimodal video search, which unifies character, scene, and dialogue models into a real-time creative discovery tool.

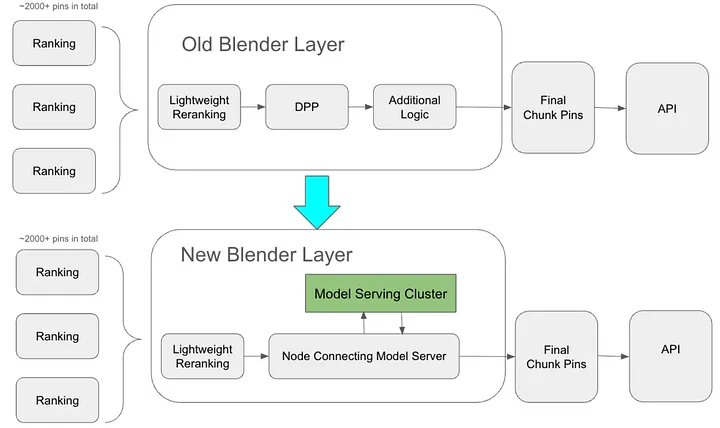

Evolution of Multi-Objective Optimization at Pinterest Home Feed

👉 For ML engineers, recommendation systems engineers, and anyone working on feed ranking or re-ranking layers

Pinterest walks through years of evolution in their feed re-ranking layer, from DPP-based diversification to Sliding Spectrum Decomposition, and shows why balancing short-term engagement against long-term user satisfaction is critical. Removing diversity produced an immediate engagement spike, but retention turned negative by week two.
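To make the engagement-versus-diversity trade-off concrete, here is a minimal sketch of a greedy diversity-aware re-ranker. It is neither DPP nor Sliding Spectrum Decomposition, and it is not Pinterest's implementation. It uses a simpler, swapped-in technique (maximal marginal relevance), and the function names, the `trade_off` parameter, and the toy embeddings are all assumptions made for illustration.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rerank_with_diversity(candidates, k, trade_off=0.7):
    """Greedy MMR-style re-ranking (illustrative only).

    candidates: list of (item_id, relevance, embedding) tuples.
    trade_off:  1.0 ranks purely by relevance; lower values penalize
                items similar to ones already selected.
    """
    remaining = list(candidates)
    selected = []
    while remaining and len(selected) < k:
        def score(candidate):
            _, relevance, embedding = candidate
            # Similarity to the closest item already in the feed.
            max_sim = max(
                (cosine(embedding, s[2]) for s in selected), default=0.0
            )
            return trade_off * relevance - (1 - trade_off) * max_sim

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return [item_id for item_id, _, _ in selected]


pins = [
    ("a", 0.90, [1.0, 0.0]),
    ("b", 0.85, [1.0, 0.0]),  # near-duplicate of "a"
    ("c", 0.60, [0.0, 1.0]),  # less relevant, but different
]
print(rerank_with_diversity(pins, k=2))                 # diversity on
print(rerank_with_diversity(pins, k=2, trade_off=1.0))  # relevance only
```

With the penalty active, the near-duplicate `b` loses its slot to the less relevant but different `c`. With `trade_off=1.0` the list is ordered by relevance alone, which is roughly the "diversity removed" condition the article describes.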

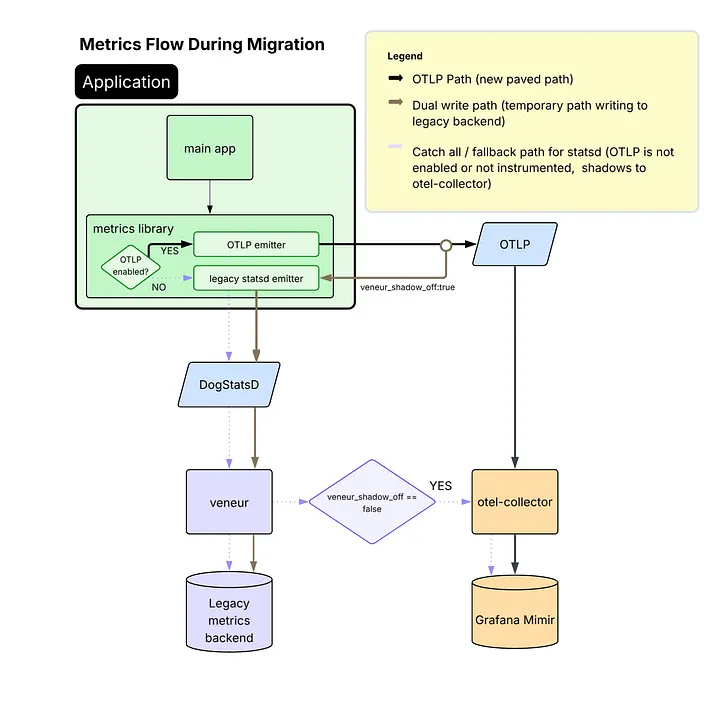

Building a High-Volume Metrics Pipeline with OpenTelemetry and vmagent

👉 Useful for platform engineers, SREs, and infrastructure teams migrating observability stacks or scaling metrics pipelines

Airbnb shares their production-tested migration from StatsD/Veneur to OpenTelemetry and Prometheus. The write-up covers a dual-write strategy and the use of delta temporality to handle high-cardinality metrics, and reports that CPU time spent on metrics processing dropped from 10% to under 1%.
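For readers unfamiliar with delta temporality, the sketch below shows the general idea: the producer reports only the change since the last export and then forgets it, so series that go quiet stop occupying memory. This is not the OpenTelemetry SDK API and not Airbnb's code. `DeltaCounter` and its methods are hypothetical names used purely for illustration.

```python
from collections import defaultdict


class DeltaCounter:
    """Toy counter that exports deltas instead of running totals."""

    def __init__(self):
        # Pending increments since the last flush, keyed by label set.
        self._pending = defaultdict(int)

    def add(self, labels, amount=1):
        # Sort the labels so {"a": 1, "b": 2} and {"b": 2, "a": 1}
        # map to the same series.
        key = tuple(sorted(labels.items()))
        self._pending[key] += amount

    def flush(self):
        """Return the deltas for this interval and drop all state."""
        batch = dict(self._pending)
        self._pending.clear()
        return batch


counter = DeltaCounter()
counter.add({"route": "/home"}, 2)
counter.add({"route": "/home"})
counter.add({"route": "/search"})
first_export = counter.flush()

counter.add({"route": "/home"})
second_export = counter.flush()

print(first_export)
print(second_export)
```

In the second export only `/home` appears: the `/search` series was not touched during that interval, so nothing is held or sent for it. A cumulative counter would have to keep and re-report every series it has ever seen.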

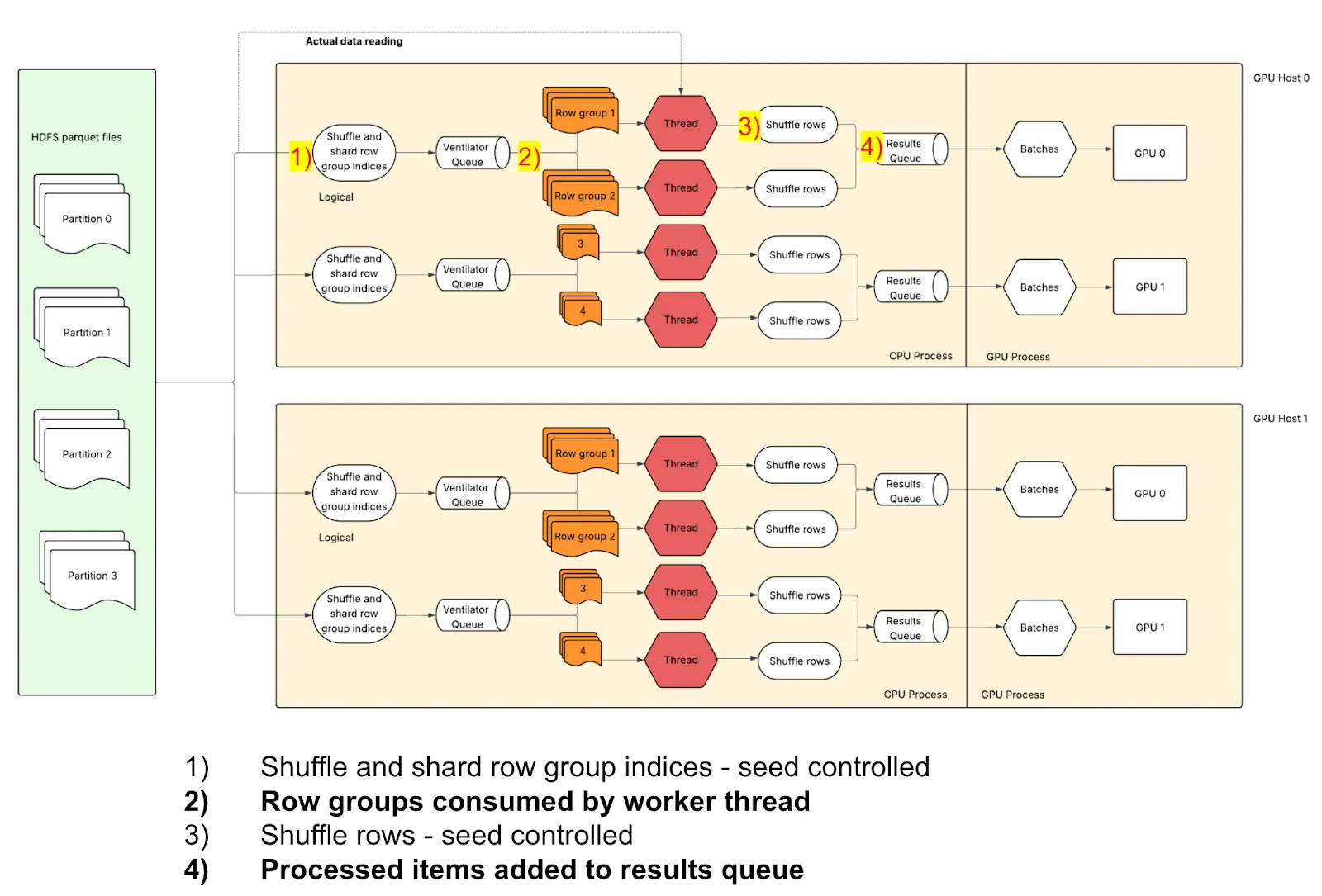

Accelerating Deep Learning: How Uber Optimized Petastorm for High-Throughput and Reproducible GPU Training

👉 For ML infrastructure engineers, deep learning engineers, and teams working on large-scale distributed training pipelines

Uber tackled a critical data-loading bottleneck in their training pipeline, where GPUs sat at 10-15% utilization while waiting for data. By optimizing Petastorm, they pushed GPU utilization to 60%+, cut training time from 22 hours to 3 hours, and reduced compute costs by nearly 80%, while also eliminating hidden sources of non-reproducibility.

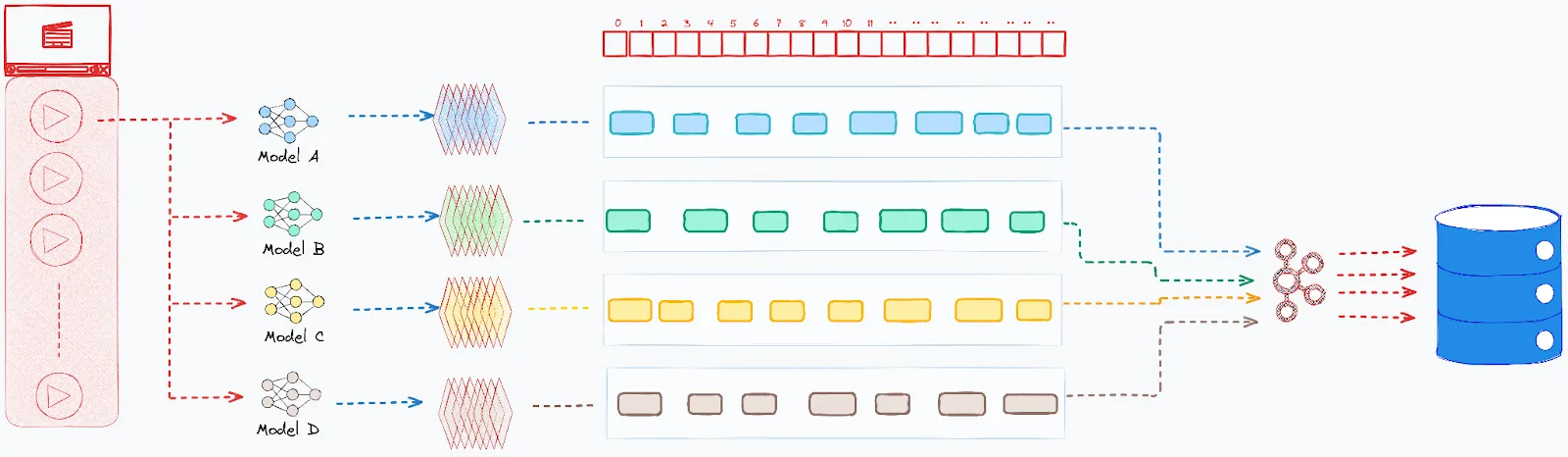

Synchronizing the Senses: Powering Multimodal Intelligence for Video Search

👉 For ML engineers, search engineers, and media/content platform teams building multimodal search or video understanding systems

Netflix built a multimodal video search engine that fuses outputs from specialized models (character recognition, scene detection, dialogue parsing) into one-second temporal buckets, enabling editorial teams to search across thousands of hours of footage with complex cross-annotation queries at scale.
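The one-second bucketing idea can be sketched in a few lines. This is only an illustration under stated assumptions, not Netflix's architecture: the annotation tuple layout, the function names `bucket_annotations` and `search`, and the sample data are all hypothetical.

```python
import math
from collections import defaultdict


def bucket_annotations(annotations, bucket_seconds=1):
    """Group model outputs into fixed-width time buckets.

    annotations: iterable of (source, label, start_s, end_s) tuples,
                 where end_s is exclusive.
    Returns a dict mapping bucket index -> set of (source, label).
    """
    buckets = defaultdict(set)
    for source, label, start_s, end_s in annotations:
        first = int(start_s // bucket_seconds)
        last = int(math.ceil(end_s / bucket_seconds))
        # An annotation lands in every bucket it overlaps.
        for bucket in range(first, last):
            buckets[bucket].add((source, label))
    return buckets


def search(buckets, required):
    """Return bucket indices that contain every required (source, label)."""
    required = set(required)
    return sorted(b for b, tags in buckets.items() if required <= tags)


annotations = [
    ("character", "Alice", 0.0, 3.0),
    ("scene", "kitchen", 2.0, 5.0),
    ("dialogue", "coffee", 2.5, 3.5),
]
buckets = bucket_annotations(annotations)

# Seconds where Alice is on screen in the kitchen.
print(search(buckets, [("character", "Alice"), ("scene", "kitchen")]))
# Seconds where "coffee" is spoken in the kitchen.
print(search(buckets, [("scene", "kitchen"), ("dialogue", "coffee")]))
```

Aligning every model's output to the same time grid turns a cross-annotation query into a set-containment check per bucket, which is why a shared bucket size is a convenient common denominator for models that otherwise emit intervals of different lengths.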