Weekend Reading #77

Weekend Reading: A weekly roundup of interesting Software Architecture and Programming articles from tech companies. Find fresh ideas and insights every weekend.

This week: a comprehensive .NET testing guide covering everything from unit test fundamentals to gRPC and contract testing. Netflix introduces MediaFM, their first tri-modal foundation model for deep content understanding. LinkedIn reveals how LLM embeddings and Generative Recommender models are powering the next generation of feed ranking for 1.3 billion users. And Pinterest walks through building a full MCP ecosystem, from registry and security to 66K monthly invocations, saving thousands of engineering hours.

Part 10: Testing – C# / .NET Interview Questions and Answers

👉 For .NET developers at all levels preparing for interviews or looking to level up their testing practices

A massive, well-structured guide covering testing in .NET from fundamentals to advanced topics: unit vs. integration tests, test doubles, Testcontainers, gRPC testing, contract testing, and common antipatterns. Each question is split by junior/middle/senior expectations with code examples.

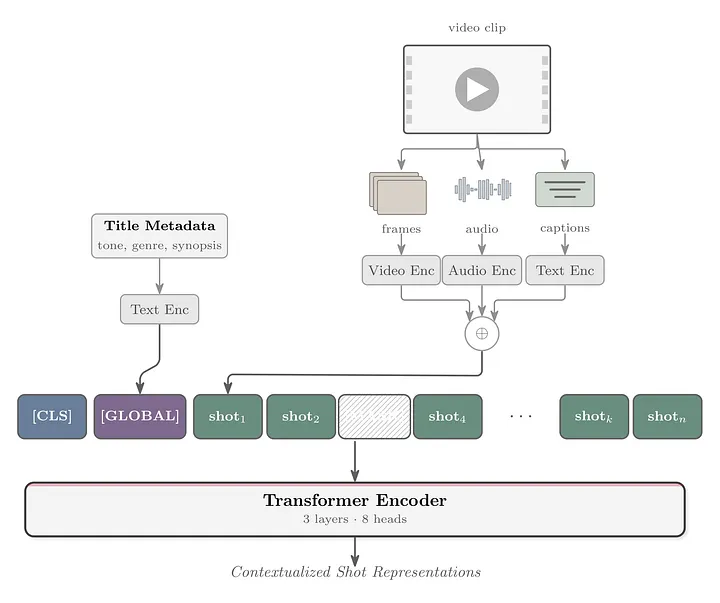

MediaFM: The Multimodal AI Foundation for Media Understanding

👉 For ML engineers, AI researchers, and teams working on multimodal models or content understanding at scale

Netflix built MediaFM, their first tri-modal (audio, video, text) foundation model, pretrained on portions of the Netflix catalog. The Transformer-based encoder generates shot-level embeddings that power use cases like ad relevance, clip tagging, and cold-start recommendations for new titles.

Engineering the Next Generation of LinkedIn's Feed

👉 For ML engineers, recommendation systems engineers, and anyone interested in large-scale feed ranking and retrieval systems

LinkedIn replaced its fragmented multi-pipeline retrieval system with a unified approach powered by LLM embeddings and a Generative Recommender model that processes 1,000+ historical interactions per user. The new architecture serves 1.3 billion professionals with more contextually aware, semantically driven feed personalization.
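To make "retrieval powered by embeddings" concrete, here is a minimal sketch of the general idea: represent the member and each post as vectors, then rank posts by cosine similarity. This is not LinkedIn's implementation; the vectors, post IDs, and the `retrieve` helper are made up for illustration, and a real system would use learned LLM embeddings and an approximate nearest-neighbor index rather than a full sort.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(member_vec, post_vecs, k=2):
    """Return the IDs of the k posts most similar to the member vector."""
    ranked = sorted(
        post_vecs.items(),
        key=lambda item: cosine(member_vec, item[1]),
        reverse=True,
    )
    return [post_id for post_id, _ in ranked[:k]]


# Toy 3-dimensional embeddings (hypothetical values, for illustration only).
member = [0.9, 0.1, 0.0]
posts = {
    "post_distributed_systems": [0.8, 0.2, 0.1],
    "post_hiring_tips": [0.1, 0.9, 0.2],
    "post_kubernetes": [0.7, 0.0, 0.3],
}

top = retrieve(member, posts, k=2)
print(top)
```

With these toy vectors, the two infrastructure-themed posts rank ahead of the hiring post because they point in roughly the same direction as the member vector.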

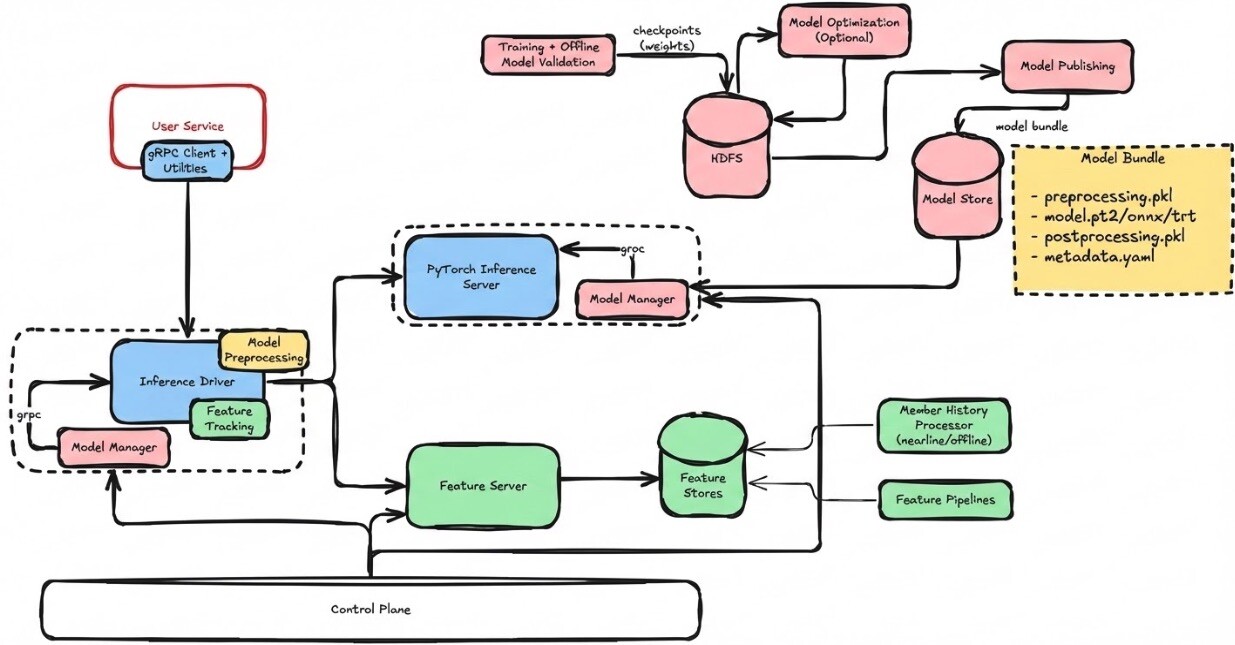

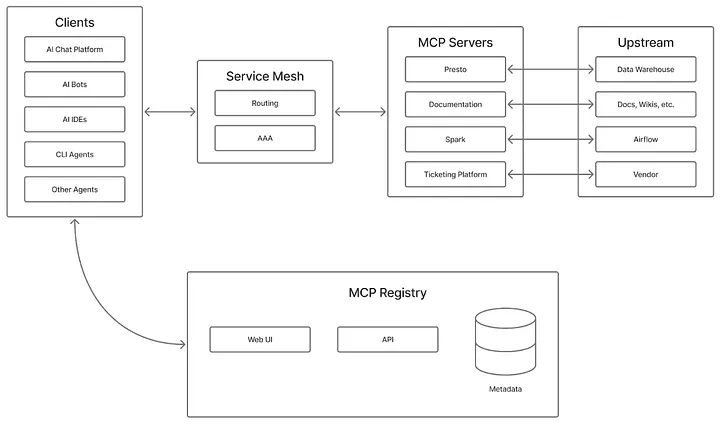

Building an MCP Ecosystem at Pinterest

👉 For platform engineers, developer productivity teams, and anyone building internal AI agent infrastructure with MCP

Pinterest shares how they went from exploring MCP to running a production ecosystem of cloud-hosted, domain-specific MCP servers with a central registry, JWT-based auth, and integrations across IDEs and internal chat. The system now handles 66,000 invocations per month, saving an estimated 7,000 engineering hours monthly.
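For a sense of scale, the two figures above imply an average saving per invocation. The quick calculation below uses only the numbers quoted in this summary; the per-invocation average is our own derived figure, not one reported by Pinterest.

```python
# Figures quoted in the summary above.
invocations_per_month = 66_000
hours_saved_per_month = 7_000

# Derived average (our arithmetic, not a number from the article).
minutes_saved_per_invocation = hours_saved_per_month * 60 / invocations_per_month

print(f"{minutes_saved_per_invocation:.1f} minutes saved per invocation")
```

That works out to a little over six minutes saved per invocation on average.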